
While there might be some reason to hope for future updates to the MacBook Pro's firmware, to macOS Mojave's handling of this, or to Mathematica that squash bugs or provide workarounds, that is unsatisfying because it does not let you try anything on your own. The Intel chip's internal power management may also take over whatever is not in Apple's control, given the hardware conditions and design choices. In this case the task seems to be single-threaded and quite taxing for the one core that has to handle it. All of this can then be amplified by a bug in the software being run. Not only are the machines new, so is macOS Mojave. The i9 MacBook Pros were known to behave strangely: potentially high power consumption and temperatures, and throttling, but not the performance output to match. There are several variables of unknown quality and quantity.

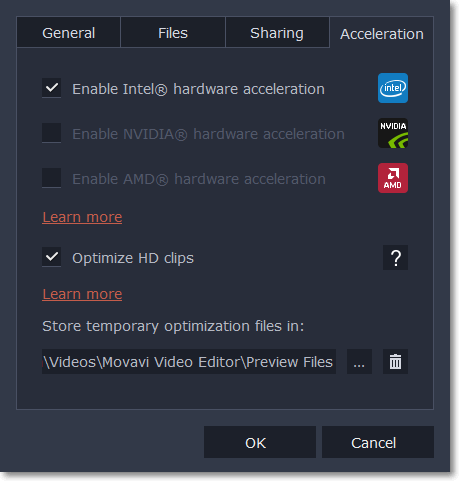

While running some specific calculations in Mathematica, the CPU gets underclocked from 2.9 GHz to 2.3 GHz in serial computations, and even to 1.5 GHz during parallel computations. I use Intel Power Gadget and Macs Fan Control to monitor the CPU and fan speeds on a 2018 MacBook Pro with an i9. I would like to have direct control of the CPU core clocks. Is this possible on new MacBooks and macOS?

This might be Mathematica-related, but so far I see no reason for such heavy underclocking. At the start of the computation Turbo Boost is active and the clock speed is higher. Then, after about a minute, the clock drops abruptly, right after the CPU temperature touches 100 °C. The average temperature is probably around 80 °C, but with Turbo Boost adjusting the clock, the temperature at some point touches 100 °C and the CPU gets underclocked. The temperature then falls to ~60 °C, but until the end of the computation this process never again gets a higher clock or Turbo Boost. After some more time (5 to 10 minutes) it underclocks a second time, although the temperature is stable.

However, if I run another application on the side, Intel Power Gadget reports a higher core frequency (it shows only the average or the maximum, not per core or thread). The total core power also increases, but only while this second process is running, as if the Mathematica process were forbidden from using a higher clock. It is as if the OS does not allow a higher CPU frequency for the "dangerous" thread, even though the temperature can eventually drop below 50 °C. All of this happens with the fans running above their default speeds, because I raise them with Macs Fan Control.

The Mathematica task is long but does not change in nature: it multiplies big matrices over and over again, just with different numbers. I am absolutely sure the system could sustain a higher CPU frequency during this, but it does not go for it. My suspicion is on the OS, since the CPU does not even reach its throttling point and there is a clear per-process preference. Increasing the process's priority made no difference. I am not sure what is happening here, which is why I give a longer description of the problem, and also because there might be a solution other than changing the frequency manually, especially if that is impossible to do.

I typically run compute jobs remotely, using my M1 MacBook as a terminal. So when PyTorch recently launched its Metal backend for M1 chips, I was curious to see what kind of GPU acceleration it could achieve. To make the process easy, Anaconda also recently released an M1-native version. However, I had to do a fresh install of Anaconda to get it to work. The M1-native Python install should also generally be faster and help conserve battery.

The setup has two steps: install Anaconda with Apple-native Python, then install PyTorch with Metal GPU acceleration. If everything is installed properly, Activity Monitor should show Python 3.9 running as an Apple process, and PyTorch should report that the "MPS" backend is available.

I then ran a simple dot product speed test on this setup, using MPS vs. CPU. After warm-up, the GPU-accelerated batch multiplication appears to be ~14x faster than the same CPU process on the M1 chip. Compared to the un-batched dot product on CPU, however, the GPU-accelerated batch dot product is only 4.3x faster. Certainly there is a performance boost, but nothing to write home about. Running the same comparison on my home workstation (RTX 6000 GPU and 10-core Xeon 2155), the GPU-accelerated batch dot product is more than 140x faster than the CPU version!

So the lesson is: don't run deep learning tasks locally on your laptop (duh!). If you must, GPU acceleration will give you some tangible speedups.
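To confirm that the Metal backend is usable before benchmarking, PyTorch exposes `torch.backends.mps.is_built()` and `torch.backends.mps.is_available()`. The sketch below assumes PyTorch 1.12 or later. The helper name `pick_device` is hypothetical, and the CPU fallback is only there so the same script also runs on machines without Apple silicon.

```python
import torch


def pick_device() -> torch.device:
    """Return the MPS device if PyTorch's Metal backend is usable, else the CPU (hypothetical helper)."""
    # is_built(): this PyTorch build was compiled with MPS support.
    # is_available(): the current macOS version and hardware can actually run it.
    if torch.backends.mps.is_built() and torch.backends.mps.is_available():
        return torch.device("mps")
    return torch.device("cpu")


if __name__ == "__main__":
    print("MPS built:", torch.backends.mps.is_built())
    print("MPS available:", torch.backends.mps.is_available())
    device = pick_device()
    print("Using device:", device)
    # Small sanity check: run a tensor op on the chosen device and copy it back.
    x = torch.ones(3, device=device)
    print((x * 2).cpu())
```

On an M1 Mac with a correctly installed Apple-native Python and PyTorch, both flags should print `True` and the device should be `mps`.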

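The comparison of batched vs. un-batched products can be reproduced roughly as below. This is a minimal sketch, not the author's benchmark script. It assumes PyTorch 2.x, because `torch.mps.synchronize()` is used to wait for asynchronous GPU work before stopping the timer. The matrix sizes, repeat count and helper names are hypothetical. "Batched" here means one `torch.bmm` call over all matrix pairs, and "un-batched" means a Python loop of individual matrix products.

```python
import time

import torch


def pick_device() -> torch.device:
    # Hypothetical helper: use Metal (MPS) if available, otherwise fall back to the CPU.
    return torch.device("mps") if torch.backends.mps.is_available() else torch.device("cpu")


def sync(device: torch.device) -> None:
    # GPU kernels run asynchronously; wait for them before reading the clock.
    if device.type == "mps":
        torch.mps.synchronize()


def batched_matmul(a: torch.Tensor, b: torch.Tensor) -> torch.Tensor:
    # One call multiplies all pairs: (B, n, k) @ (B, k, m) -> (B, n, m).
    return torch.bmm(a, b)


def looped_matmul(a: torch.Tensor, b: torch.Tensor) -> torch.Tensor:
    # Un-batched baseline: one matrix product per pair, stacked afterwards.
    return torch.stack([a[i] @ b[i] for i in range(a.shape[0])])


def time_it(fn, a, b, device: torch.device, repeats: int = 5) -> float:
    """Average wall-clock seconds per call, after one warm-up call."""
    fn(a, b)  # warm-up: kernel setup and memory allocation
    sync(device)
    start = time.perf_counter()
    for _ in range(repeats):
        fn(a, b)
    sync(device)
    return (time.perf_counter() - start) / repeats


if __name__ == "__main__":
    torch.manual_seed(0)
    B, N = 64, 256  # hypothetical batch size and matrix dimension
    cpu = torch.device("cpu")
    dev = pick_device()
    a_cpu, b_cpu = torch.randn(B, N, N), torch.randn(B, N, N)
    a_dev, b_dev = a_cpu.to(dev), b_cpu.to(dev)

    t_loop_cpu = time_it(looped_matmul, a_cpu, b_cpu, cpu)
    t_bmm_cpu = time_it(batched_matmul, a_cpu, b_cpu, cpu)
    t_bmm_dev = time_it(batched_matmul, a_dev, b_dev, dev)

    print(f"un-batched, CPU : {t_loop_cpu * 1e3:.2f} ms")
    print(f"batched,    CPU : {t_bmm_cpu * 1e3:.2f} ms")
    print(f"batched,    {dev.type.upper():<4}: {t_bmm_dev * 1e3:.2f} ms")
    print(f"speedup vs batched CPU   : {t_bmm_cpu / t_bmm_dev:.1f}x")
    print(f"speedup vs un-batched CPU: {t_loop_cpu / t_bmm_dev:.1f}x")

    # The two methods should agree up to float32 rounding differences.
    assert torch.allclose(batched_matmul(a_dev, b_dev).cpu(), looped_matmul(a_cpu, b_cpu), rtol=1e-3, atol=1e-3)
```

The warm-up call and the explicit synchronization matter on the GPU. Without them the first measurement includes one-off setup costs, and the timer can stop before the queued kernels have finished. Absolute numbers will vary with matrix size and hardware.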